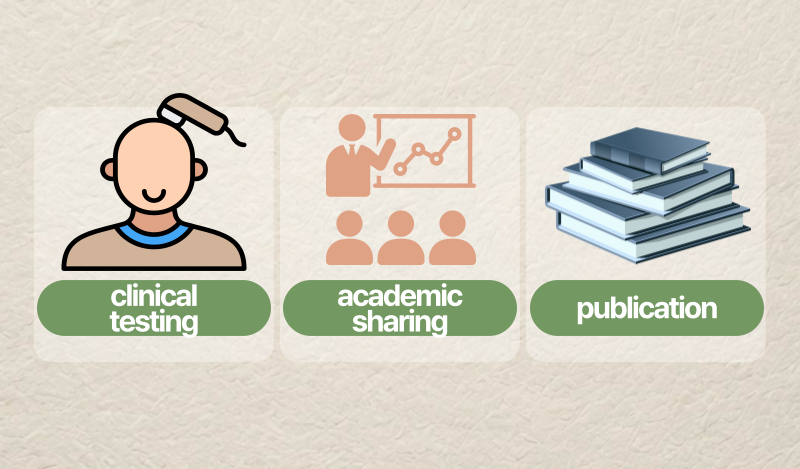

In medical aesthetics and hair care, credibility rarely comes from a single moment. It is usually built through continuity – a sequence of clinical testing, academic sharing, and publication that makes the work visible to professional scrutiny.

That is the story of SGF57, often described as the original blueprint behind AesMed’s later evidence-driven approaches, including AGF39. In this final post of our three-part series, we look at how the 2013 and 2014 conference posters, read together with a 2012 peer-reviewed publication, form a practical “evidence chain.”

This is not a claim that conference posters are equivalent to large-scale randomized trials. They are not. But when read responsibly alongside peer-reviewed research, they can show how a clinical concept evolves, how parameters are refined, and how a team learns in public.

Why Conference Evidence Matters in Medical Aesthetics

Conference posters occupy a useful space between daily clinical practice and peer-reviewed journals.

- Journals typically emphasize methodological detail, formal analysis, and limitations.

- Clinics emphasize real-world feasibility and patient variability.

- Conferences often bridge the two: early signals, practical insights, and “what we learned next.”

In the SGF57 evidence trail, conference posters functioned as checkpoints. They helped expand the story beyond a small pilot setting and highlighted which protocol elements might deserve optimization.

When a treatment concept is discussed across multiple scientific venues over time, it can indicate something important: a commitment to transparency and iterative improvement rather than one-time promotion.

2013: Scaling Clinical Experience (Broader Patient Insights)

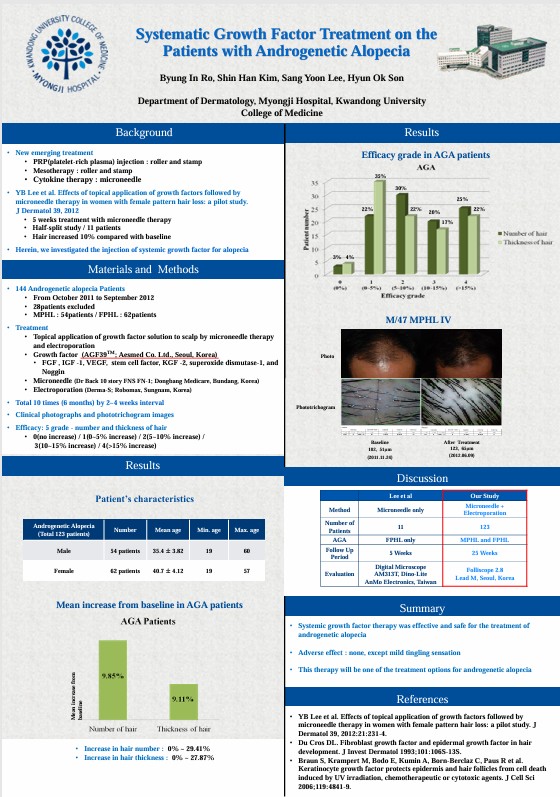

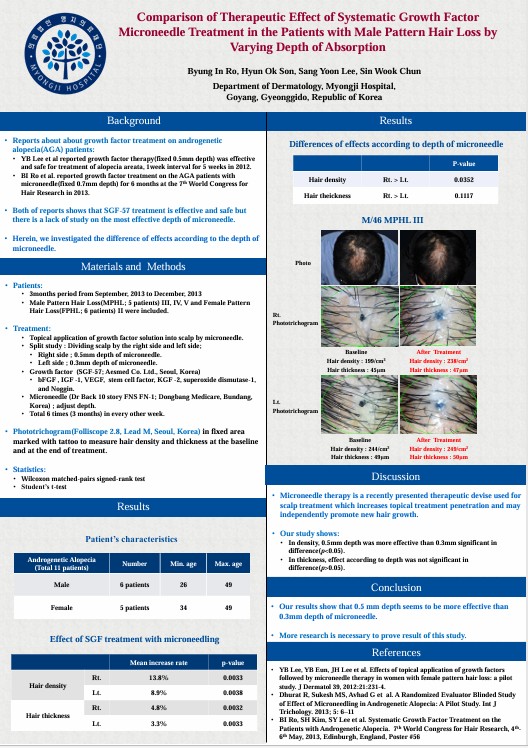

The 2013 World Congress for Hair Research (WCHR) poster summarized a broader clinical experience with androgenetic alopecia (AGA) in both male and female patients.

What the poster described (high-level)

The poster describes a protocol involving:

- topical application of a growth factor solution

- use of microneedle therapy and electroporation

- repeated sessions over months

- evaluation using standardized photography and phototrichogram-based tools

From a credibility perspective, this poster adds value not by being “final proof,” but by providing what a pilot study cannot:

- more patients

- longer clinical timelines

- greater exposure to real-world variability

Why scale matters

A small pilot study can show early promise, but it cannot reveal the full range of clinical response patterns. As scale increases, clinicians begin to observe:

- differences by baseline severity

- differences in response speed

- variability in patient satisfaction

- practical elements (visit frequency, tolerability, adherence)

The 2013 poster also includes a simple safety statement: no adverse effects other than a mild tingling sensation.

This kind of reporting does not replace formal adverse-event registries, but it does suggest that, in routine clinical use across multiple sessions, the approach was generally tolerated.

What we should and should not conclude

A responsible read of the 2013 poster is:

- It supports feasibility and tolerability over multiple sessions.

- It suggests that measurable changes were being tracked with phototrichogram methods.

- It does not, by itself, establish definitive efficacy, because posters are summarized formats and may not provide full statistical detail.

In other words: scale expands learning, but it also requires careful interpretation.

2014: Optimizing the Details (Needle Depth and Outcomes)

If 2013 was about “scaling,” 2014 was about “refining.”

A key practical question emerged in microneedling-based scalp delivery:

How deep should the microneedles be to maximize outcomes while preserving comfort and safety?

What the 2014 poster tested

The 2014 WCHR poster evaluated microneedle depth using a split-scalp design:

- one side treated at 0.5 mm

- the other side treated at 0.3 mm

- outcomes measured using phototrichogram in a fixed, tattoo-marked scalp area

This matters because it shows an intent to move from “we do microneedling” to “we define and optimize microneedling parameters.”

What was observed

The poster reports:

- both sides improved after treatment

- hair density improved more significantly on the 0.5 mm side

- hair thickness differences between depths were limited (not statistically significant)

This is exactly how protocol optimization should look in credible clinical work:

- test one variable

- keep the rest consistent

- measure outcomes objectively

- report both what changed and what did not

Why “what did not change” matters

It is tempting in marketing to highlight only positive signals. But credibility increases when evidence includes nuance:

- “density improved more at 0.5 mm”

- “thickness did not differ meaningfully between depths”

That second statement is not a weakness; it is a sign of honest reporting and a reminder that different outcome metrics can respond differently.

What the poster itself suggests next

The 2014 poster concludes that 0.5 mm seems more effective than 0.3 mm and notes the need for further research.

This is a practical bridge to future studies: larger samples, longer follow-up, and more refined outcome measures.

What Peer-Reviewed Publication Adds (Why Journals Matter)

Conference posters can show direction, but peer-reviewed publications provide a different kind of value: methodological transparency.

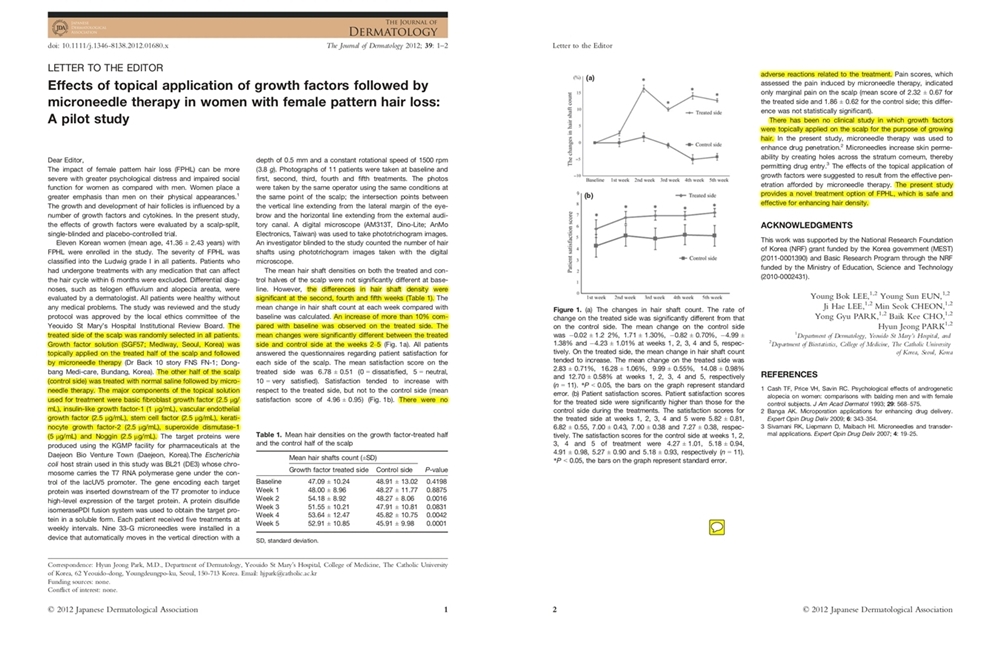

The 2012 peer-reviewed pilot study provided details such as:

- study design (split-scalp, single-blinded, placebo-controlled)

- participant characteristics (female pattern hair loss, FPHL)

- protocol (0.5 mm needle depth, five weekly sessions)

- measurement method (phototrichogram hair shaft counts by a blinded investigator)

- safety and pain reporting

It also reported key signals such as:

- >10% increase vs baseline observed on the treated side

- significant differences at specific time points

- no adverse reactions related to treatment

This publication acts as the anchor of the evidence chain: it makes the study readable, reviewable, and citable by others.

Evidence Timeline: 2012–2014 (Timeline + Checkpoints)

Here is the credibility logic as a timeline with checkpoints:

- 2012 (Journal checkpoint): protocol + measurement + safety reported with peer-review transparency

- 2013 (Conference checkpoint): broader patient insights and multi-session feasibility signaled

- 2014 (Conference checkpoint): protocol variable (depth) tested, density signal clarified, limitations acknowledged

This is what “verification continuity” looks like in practical clinical innovation: not one dramatic claim, but multiple steps of testing, sharing, and refining.

How This Legacy Supports AGF39’s Reliability (Ethical Bridge)

SGF57 is often described as the original blueprint behind AGF39 because it represents an early, structured attempt to connect:

- a multi-factor concept

- a defined delivery method

- objective measurement

- and academic disclosure

To be clear, this does not mean that every product iteration produces identical outcomes, or that the same results apply to every patient. It means that the evidence-first philosophy – defined methods, measurable outcomes, and transparent communication – has been present since the SGF57 era.

That philosophy is a large part of what readers and professionals often mean when they talk about “reliability.”

AGF39 is built on the same evidence-first philosophy: the belief that trust is earned through protocols, measurements, and responsible reporting, not through overconfident promises.

Limitations and Why Follow-up Matters

A credibility story becomes stronger when it includes limitations. Across 2012–2014, several practical limitations are important:

- small sample size in pilot and parameter-comparison studies

- poster formats provide summarized data and may lack full methodological detail

- longer-term durability and subgroup analysis require larger, controlled follow-up

These limitations are not reasons to dismiss the evidence; they are reasons to interpret it appropriately and to continue building it.

That is the real value of continuity: it keeps the conversation open, measurable, and improvable.

Subscribe to AesMed’s blog to follow future evidence-based insights on hair care protocols, measurement methods, and responsible clinical communication.

“Some of the images were created using Miricanvas and Gemini.”

SGF57: The Original Blueprint Behind AGF39 – Series Part 1


The Clinical Study Behind the Original SGF57 Formula – Series Part 2
